I am a PhD candidate at HKU, supervised by Lingpeng Kong.

My current research interests include diffusion language models and long-context language models. I am exploring different generation paradigms for better controllability and reasoning capacity.

Previously, I worked at Shark-NLP, Shanghai AI Lab, as an NLP researcher. I graduated from Shanghai Jiao Tong University (SJTU), supervised by Kenny Zhu. I used to work on pose estimation, face recognition, hierarchical text classification, and recommendation systems.

➡️ See my Resumé (updated in Sep 2025)

“I can only show you the door, you’re the one that has to walk through it” – Morpheus (The Matrix)

📚 Selected Publications

* indicates equal contribution. (Updated in Sep 2025)

Diffusion for text

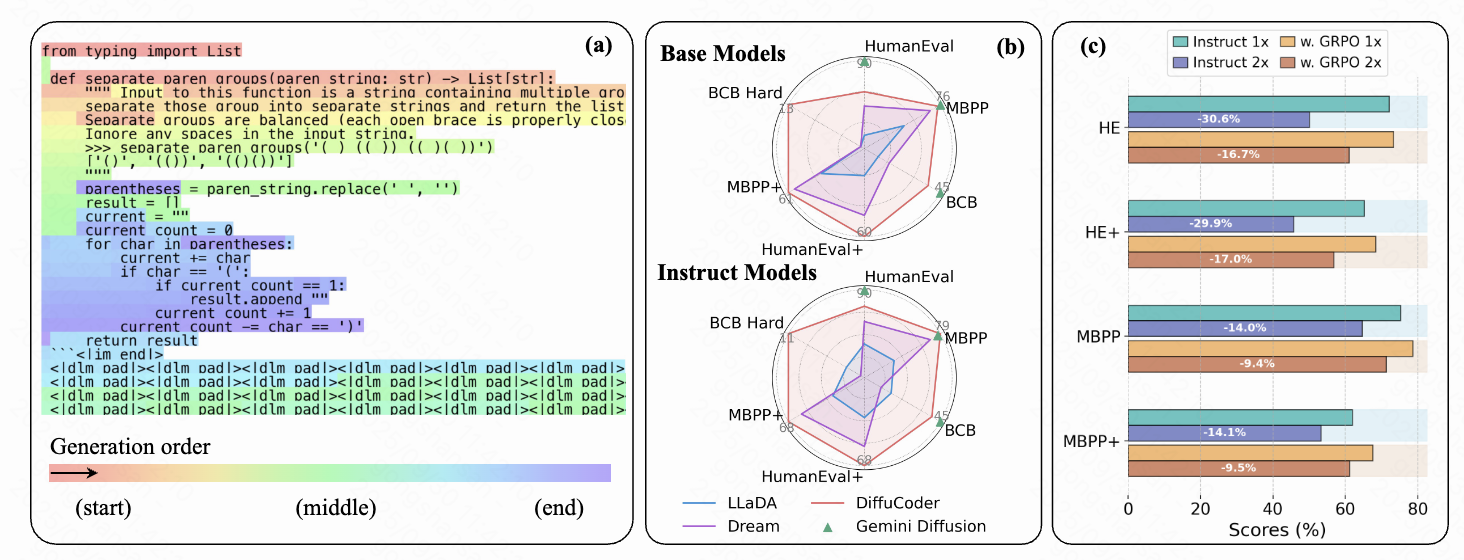

DiffuCoder: Understanding and Improving Masked Diffusion Models for Code Generation (preprint)

Shansan Gong, Ruixiang Zhang, Huangjie Zheng, Jiatao Gu, Navdeep Jaitly, Lingpeng Kong, Yizhe Zhang

DiffuCoder

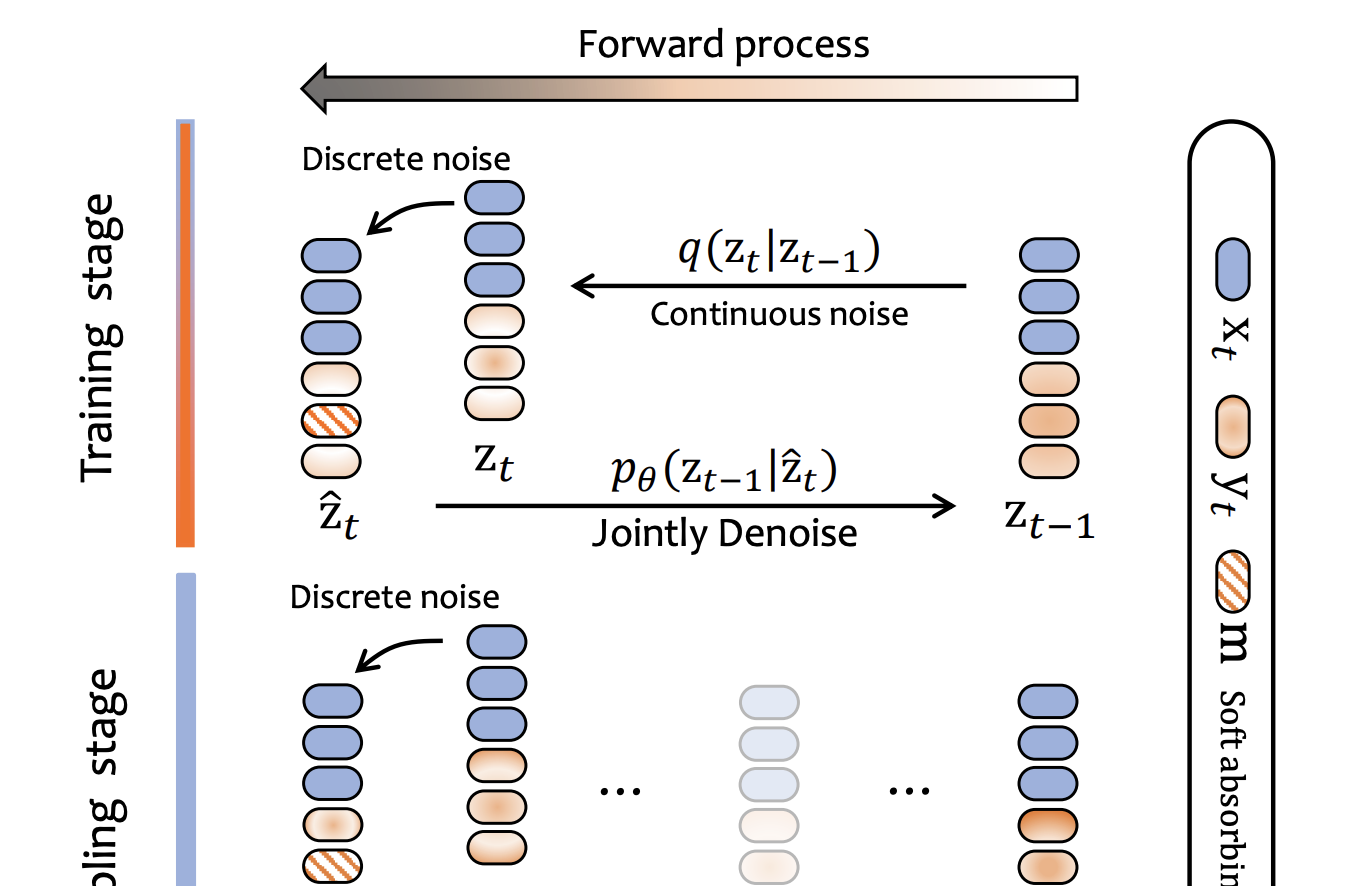

Continuously Augmented Discrete Diffusion model for Categorical Generative Modeling (preprint)

Huangjie Zheng, Shansan Gong, Ruixiang Zhang, Tianrong Chen, Jiatao Gu, Mingyuan Zhou, Navdeep Jaitly, Yizhe Zhang

We propose CADD, a framework that augments the discrete state space with a paired diffusion in a continuous latent space.

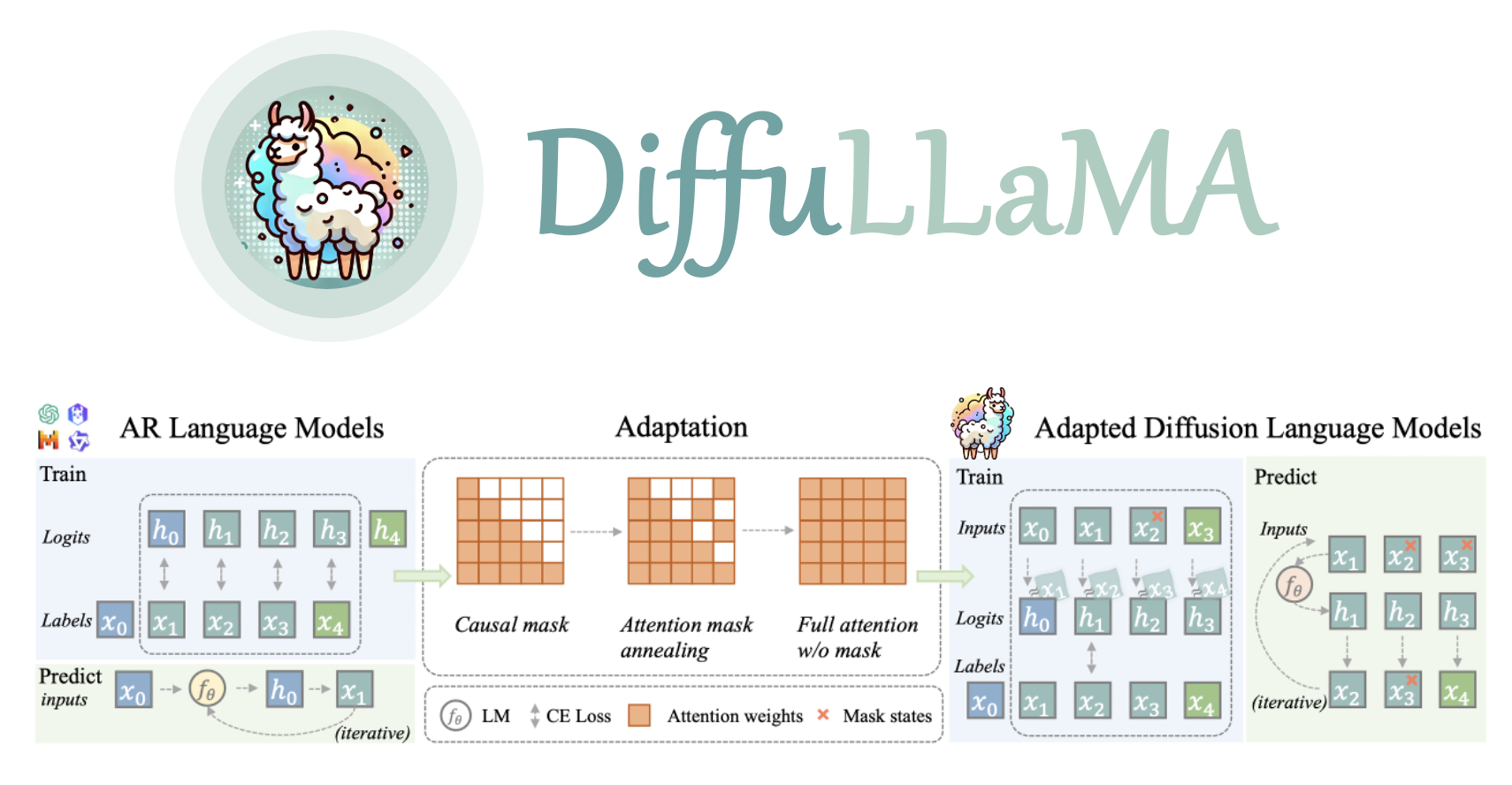

Scaling Diffusion Language Models via Adaptation from Autoregressive Models (ICLR 2025)

Shansan Gong*, Shivam Agarwal*, Yizhe Zhang, Jiacheng Ye, Lin Zheng, Mukai Li, Chenxin An, Peilin Zhao, Wei Bi, Jiawei Han, Hao Peng, Lingpeng Kong

DiffuLLaMA

Beyond Autoregression: Discrete Diffusion for Complex Reasoning and Planning (ICLR 2025)

Jiacheng Ye, Jiahui Gao, Shansan Gong, Lin Zheng, Xin Jiang, Zhenguo Li, Lingpeng Kong

Code

Diffusion of Thoughts: Chain-of-Thought Reasoning in Diffusion Language Models (NeurIPS 2024)

Jiacheng Ye*, Shansan Gong*, Liheng Chen*, Lin Zheng, Jiahui Gao, Han Shi, Chuan Wu, Zhenguo Li, Wei Bi, Lingpeng Kong

DoT

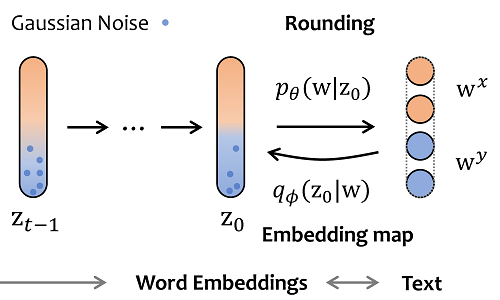

DiffuSeq-v2: Bridging Discrete and Continuous Text Spaces for Accelerated Seq2Seq Diffusion Models

Shansan Gong, Mukai Li, Jiangtao Feng, Zhiyong Wu, Lingpeng Kong

Code | Accelerated version of DiffuSeq, where discrete noise bridges the training and sampling stages, reducing the time cost of both.

DiffuSeq: Sequence to Sequence Text Generation With Diffusion Models

Shansan Gong, Mukai Li, Jiangtao Feng, Zhiyong Wu, Lingpeng Kong

DiffuSeq

Long context language models

GIRAFFE: Design Choices for Extending the Context Length of Visual Language Models (ACL 2025)

Mukai Li, Lei Li, Shansan Gong, Qi Liu

GIRAFFE | Explores design choices for extending the context window of existing VLMs.

L-Eval: Instituting Standardized Evaluation for Long Context Language Models (ACL 2024 Outstanding)

Chenxin An, Shansan Gong, Ming Zhong, Mukai Li, Jun Zhang, Lingpeng Kong, Xipeng Qiu

L-Eval

Training-Free Long-Context Scaling of Large Language Models (ICML 2024)

Chenxin An, Fei Huang, Jun Zhang, Shansan Gong, Xipeng Qiu, Chang Zhou, Lingpeng Kong

ChunkLlama

In-Context Learning with Many Demonstration Examples

Mukai Li, Shansan Gong, Jiangtao Feng, Yiheng Xu, Jun Zhang, Zhiyong Wu, Lingpeng Kong

EVALM | A pre-trained language model with efficient attention and an 8k context length.

Before LLMs

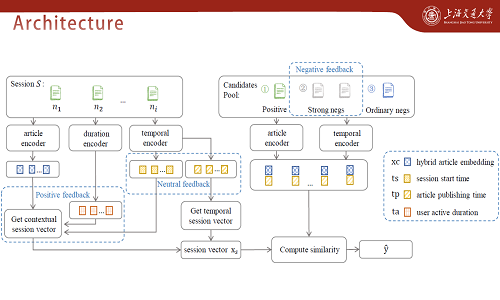

Positive, Negative and Neutral: Modeling Implicit Feedback in Session-based News Recommendation

Shansan Gong, Kenny Q. Zhu

TCAR

🎊 Honors and Awards

- 2024 ACL, Outstanding Paper

- 2024 Tencent Rhino-bird Research Elite Program, Outstanding Student

- 2022 SIGIR Student Travel Award

- 2022 Outstanding Graduate in Shanghai Municipality

- 2019 Outstanding Undergraduate in SJTU

💬 Invited Talks

- 2023.06, DiffuSeq, Youth PhD Talk-ICLR 2023 by AI Time. | [Slides]

- 2023.05, Incorporate Diffusion Models into Conditional Text Generation, Global Lunch Seminar at SJTU CS department. | [Slides]

📖 Education

- 2019.06 - 2022.03, Master, Computer Science, SEIEE, Shanghai Jiao Tong University.

- 2015.09 - 2019.06, Undergraduate, Information Engineering, SEIEE, Shanghai Jiao Tong University.

💻 Internships

- 2025.01 - 2025.08, Research Intern, Diffusion Text Generation, Apple MLR, Seattle.

- 2023.11 - 2024.10, Research Intern, Diffusion Text Generation, Tencent AI Lab, Shenzhen.

- 2021.12 - 2022.03, RE Intern, Product Categorization, Meituan, Shanghai.

- 2021.06 - 2021.10, SDE Intern, Bing Search Optimization, Microsoft STCA, Beijing.

- 2019.12 - 2022.03, CTO, iWenBooks APP Development, Yousheng Tech Inc, Shanghai.

📌 Services

- Conference Reviewer: COLING2022, ACL2023, NeurIPS2023-, EMNLP2023, ICLR2024-, ARR2024-

- Journal Reviewer: ACM Computing Surveys, IEEE Journals

- TA at HKU: COMP2121 (Discrete math), COMP7104 (Advanced database systems)

- One of the hosts of HKU Seminar

All those moments will be lost in time, like tears in rain. – Blade Runner